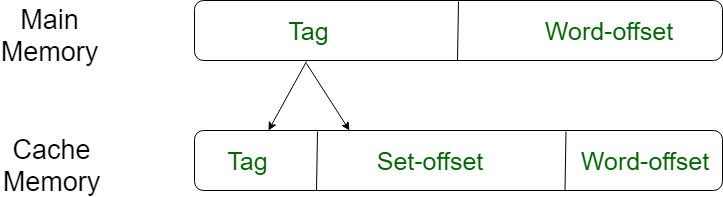

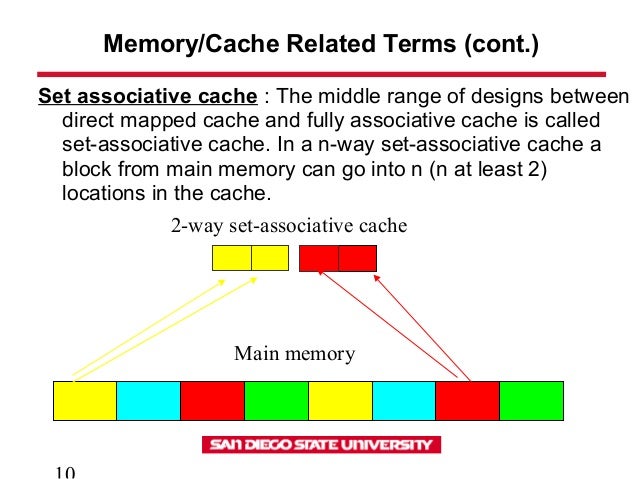

In a memory system, access times are shorter when the data the processor needs is closer to it. In a computer, the entire memory can be separated into different levels based on access time and capacity. Figure 1 shows the different levels in the memory hierarchy.

Smaller and faster memories are kept closer to the processor, so it is beneficial to keep the data the processor needs in those levels.

(Source: Author)

According to the principle of locality, when a processor accesses a byte of memory, it is likely to access the same location again soon (temporal locality). It is also likely to access nearby bytes soon (spatial locality). In Figure 2, instructions like comparing ‘i’ and ‘n’, incrementing ‘i’, adding ‘a’ to 1, etc. are executed multiple times. Variables like ‘i’, ‘a’, ‘b’, ‘n’, and ‘c’ are used numerous times. These instructions and variables form the working set. If the working set can be kept in the cache, the program will run faster. As the program executes, the working set shifts.

There are three different types of cache mapping: direct mapping, fully associative mapping, and set associative mapping.

In a direct mapped cache, a memory block can be mapped to only one possible location in the cache memory. For instance, let’s consider an 8KB cache with a cache line size of 64 bytes. This means the cache has 128 cache lines (8192/64 = 128). Within an incoming address to the cache, the lowest 6 bits are needed to address each byte in a cache line (2^6 = 64). The next 7 bits in the address are used to map the memory block to a cache line (2^7 = 128). The rest of the bits (19 bits for a 32-bit address) are stored in the cache memory as the tag that identifies the memory block.

In a fully associative cache, a memory block can be placed in any of the cache lines. If the same cache in the above example were fully associative, no bits would be required to index into a cache line. So, apart from the 6 cache offset bits, all the remaining bits would be tag bits.

Figure 4. Cache address of fully-associative cache. (Source: Author)

Set associative cache

A set associative cache is a combination of a direct mapped cache and a fully associative cache. In a set associative cache, every memory block maps to exactly one set, and each set contains ‘n’ cache lines. For example, a 4-way set associative cache has 4 cache lines in every set. Within each set, cache mapping is fully associative. So, a set associative cache needs a few bits in the address to index the set. Let’s consider the same cache in the above example to be 4-way set associative, meaning there would be 32 sets (128/4 = 32). 5 bits would be needed to index into a set (2^5 = 32).

Figure 5.

Now, let’s look at a for loop with 8 iterations. In every iteration, an element of an array (with data type ‘int’) gets incremented. Let’s assume that in the first iteration a memory block (64 bytes) containing the a[0] element (4 bytes) is brought from the main memory to the level 1 cache. A 64-byte block holds 16 such elements, so provided the array does not straddle a block boundary, all the data the processor needs in the following iterations (a[1], a[2], a[3], … a[7]) will already be in the level 1 cache. This brings down the memory access time to a great extent.

Figure 6. Representation of a memory block in main memory and cache memory.

Let’s consider a direct mapped cache with 128 cache lines and a 64-byte cache line size. To execute the first statement, the memory block at address 0 in the main memory is brought into index 0 of the level 1 cache. Execution of the second statement needs memory block number 32’h80 (byte address 32’h2000) to be brought into the cache. Since block 32’h80 also maps to index 0 (128 mod 128 = 0), the existing valid cache line needs to be evicted to bring in the new memory block. The next instruction, which requires memory block number 32’h100 (byte address 32’h4000), again triggers a cache line eviction and a memory block fetch. This program results in poor performance when a direct mapped cache is used.

Now let’s look at how a fully associative cache with 128 cache lines and a 64-byte cache line size behaves. When each of these instructions gets executed, a new memory block is brought into the cache memory, but there is no need to evict the existing memory block, since the new block can be placed anywhere in the cache memory provided that the cache still has invalid (unused) cache lines.

A 4-way set associative cache has 4 cache lines per set, so it can allocate up to 4 memory blocks to the same index without eviction. The three instructions above will therefore lead to the allocation of three memory blocks under set 0.

When consecutive instructions fetch memory blocks that map to one index or one set, direct mapped and set associative caches will experience more conflicts. There will be evictions and new memory blocks will have to be brought in. But there are advantages to these types of caches.
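The address breakdown and the three-access conflict example can be checked with a small simulation. This is only a sketch under stated assumptions: it models the 8KB cache with 64-byte lines from the text, treats the addresses 32’h80 and 32’h100 as memory block numbers (byte addresses 0x2000 and 0x4000), and assumes an LRU replacement policy, which the text does not specify. The names `split_address`, `index_bits`, and `count_evictions` are hypothetical helpers made up for this illustration.

```python
from collections import OrderedDict

LINE_SIZE = 64    # bytes per cache line, as in the text
NUM_LINES = 128   # 8 KB / 64 B


def index_bits(ways):
    """Number of address bits needed to select a set."""
    num_sets = NUM_LINES // ways
    return num_sets.bit_length() - 1  # log2 for a power of two


def split_address(addr, ways):
    """Split a byte address into (tag, set_index, offset).

    ways=1 models a direct mapped cache,
    ways=NUM_LINES models a fully associative cache.
    """
    num_sets = NUM_LINES // ways
    offset = addr % LINE_SIZE
    block = addr // LINE_SIZE          # memory block number
    return block // num_sets, block % num_sets, offset


def count_evictions(addresses, ways):
    """Count evictions for a sequence of byte addresses.

    Assumes LRU replacement within each set (an assumption;
    the text does not name a policy).
    """
    sets = {}
    evictions = 0
    for addr in addresses:
        tag, index, _ = split_address(addr, ways)
        lines = sets.setdefault(index, OrderedDict())
        if tag in lines:               # hit: mark as most recently used
            lines.move_to_end(tag)
            continue
        if len(lines) == ways:         # set is full: evict the LRU line
            lines.popitem(last=False)
            evictions += 1
        lines[tag] = True              # bring the new block in
    return evictions


# Blocks 0, 0x80 and 0x100 expressed as byte addresses.
accesses = [0x0000, 0x2000, 0x4000]

for name, ways in [("direct mapped", 1),
                   ("4-way set associative", 4),
                   ("fully associative", NUM_LINES)]:
    print(f"{name}: {index_bits(ways)} index bits, "
          f"{count_evictions(accesses, ways)} evictions")
```

With these three accesses the direct mapped configuration evicts on the second and third access, while the 4-way and fully associative configurations keep all three blocks resident. Passing 0x80 itself as a byte address to `split_address` gives index 2 rather than 0, which is why the sketch uses block numbers.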
